IP-AI •

Will Indigenous ways of thinking save AI?

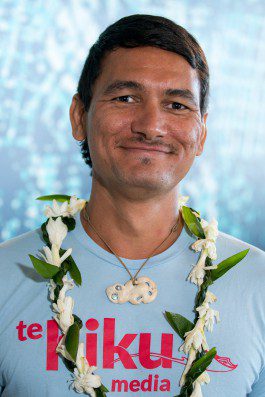

Keoni Mahelona is currently building Te Reo Māori speech recognition tools including text to speech, speech to text, and measuring pronunciation. He attended the March 2019 Indigenous Protocol and Artificial Intelligence workshops in Hawai’i. Here he explores the future of AI.

I rarely blog. Not good at it. In 3rd grade I was put into the special reading class. Reading and writing was never my thing, but I always loved math and science and all disciplines derived from those fundamental subjects.

I’m attending an Indigenous AI workshop in Hawaiʻi. I initially thought this was gonna be a brown nerd meetup 😅 but it’s much better than that. The point is to bring together Indigenous and some non-Indigenous doers, makers, and creators to discuss what Indigenous AI is and how it will play an important role in the future of AI for humanity.

It’s probably best to insert my background here to justify why you should even consider what I have to say on the matter. I won’t do that. Those who know the work I do, primarily the communities I serve, know me and respect my whakaaro. That’s important here: community and trust. I’ll try to link that in later (again, I’m not a good writer).

So the question I have to answer is “what does the future look like for AI?” I’ll answer this question purely based on what I know now from the work I’ve done over the years in science and engineering as a Kanaka Māoli.

I need to preface that I’ll use machine learning and AI interchangeably. Machine learning is a tool that might lead to artificial intelligence, but I don’t think that will happen. Peter Lucas Jones (also attending the workshop) says it best, “Ko te AI tētahi karetao ka taea e tātou te whakakōrero me te whakakanikani. Mā te whakamahi i o tātou rarāunga me ngā kōrero tuku iho, ka tutuki ngā āhutanga o te karetao.” He’s basically saying AI is a puppet and we make it do what we want using our data and knowledge. Puppet. Until we figure out a way to do AI that isn’t only data driven, I don’t think we’ll reach the singularity.

For me, the future for AI is looking bad. Currently the big corporates (the wealthy, the 1%, the colonizers, etc.) are leading the way in AI. The current technology trends show that you need vast amounts of data and huge computational power to achieve anything close to ‘AI.’ The scales at which AI works are financially unreachable by most people, and I find this terribly frightening—corporates have more power in AI than sovereign nations (that’s nothing new in colonial history—profits drove much of colonization including the overthrow of the Hawaiian Kingdom with the illegal aid of the U.S. Military).

Having said that, a small non-profit, Te Hiku Media, is able to deploy its own speech recognition software in the cloud thanks to services like AWS and open source projects like Mozilla’s DeepSpeech. In this case, machine learning is just another tool to help us do what we need to.

The difference between Te Hiku Media’s ‘AI’ and Google’s ‘AI’ is that ours is created from our Indigenous language: our data. We collected this data. We look after this data with tikanga (cultural practices and values). We will not allow large corporates to have access to this data and use it to exploit us (e.g. serve us ads, sell our language as a service back to us, read our cultural knowledge, etc.). This data is unique to our people, about 600k Māori, the Indigenous people of Aotearoa. We were able to collect this data because the community that shared it with us trusts us. We’ve worked with the community and for the community for the last 30 years. Our data is what makes us unique. It is our own ‘AI,’ the puppet we’ve created to help us achieve our goals and aspirations as a people revitalizing our reo.

This is where data sovereignty (privacy and guardianship over individual data and the data of groups of people) is critically important. If we can maintain that sovereignty, we can prevent the 1% from further colonizing us. But I see the opposite happening. Global corporates like Lionbridge are soliciting Indigenous people to sell them their language: they’ll pay you US$45 for 1 hour of your time. They clearly have customers in mind, as they’re a globalization and localization company. You see companies like Duolingo and Drops being given our languages for the sake of revitalisation and promotion. And while these companies might be good at heart, they make a profit from selling language services. Do those profits make their way back to the communities from which the language data was taken? Or should we be thanking them as the saviours of our people, and they can have our data for free… whatever happened to all our land? Of course the biggest insult comes from DNA companies like Ancestry.com. YOU PAY THEM to GIVE THEM YOUR GENETIC DATA, and they have the right to use it as they deem fit. Read the terms and conditions, whānau! AI is very much about our data and our knowledge.

Don’t get me wrong. I know society as a whole could benefit when we share genetic data, when we open source knowledge, and when we put data in the public domain. But in a world with so much inequality, racism, genocide, the list goes on and on, clearly only the wealthy are to benefit from these ‘public’ goods and services.

I wish AI could change the balance of power, but I can’t imagine that happening anytime soon. It’s possible that a technological revolution could do the trick. If/when quantum computers (or some computationally equivalent tech) exist at the consumer level, that could give the 99% similar power to the 1%. But history dictates that the technology itself isn’t enough to ‘do good.’ We need laws and ethics around the technology that guides its use for the benefit of all of humanity (and the planet) and not just the wealthy, pale, stale, and males. Chief Sitting Bull made such a keen observation in the 19th century that still stands today, “the white man knows how to make everything, but he does not know how to distribute it.” He said this on reflection of the white man’s neglect for their poor. With all the Western wealth and technologies in 2019, we still can’t solve such a basic problem as poverty.

Western science is only just recognizing how Indigenous knowledge can help our planet, especially in the face of environmental destruction and climate change. I believe how Indigenous people look after their data and knowledge could also help form a framework for AI that works in the best interest of everything contained within our solar system. We personified land and water not because we were hedonistic, demigod worshipers, but because these personifications allowed us to maintain a level of respect and responsibility toward our environments.

I think AI will reaffirm Indigenous knowledge, especially around the fringes of science. For example, how are humans affected by the moon, māramataka? There’s a huge body of traditional knowledge around that, and while western science might call this new age mumbo jumbo (thanks hippies!), the data I’ve observed (people around me have cycles of behavior aligning with the lunar cycle) is enough for me to say, hey, how could we measure these behaviors and use them to predict patterns? Machine learning could help us understand from a western perspective some of what we already know in an Indigenous context.

For an AI to not be a puppet, I think it needs to be able to do something as basic as caring for the poor without being forced to do so. It’s one thing to force people to pay taxes and another for people to fundamentally understand the value and joy in paying taxes in a civilised society. I live in New Zealand. I do enjoy paying taxes because I know it means I get free health care and it helps with the conservation and protection of New Zealand ecosystems. I would not enjoy paying taxes in the U.S. because it funds genocide, colonisation, and the wealthy.

But what creates that difference between being forced to do good and having joy in doing good? I suppose that’s nurture. How we grow up, the people we are surrounded by, and the communities we belong to all come together to shape why we do the things we do. We’re a reflection of our environment, or rather the data we’re exposed to determines whether we want to do the things we do or whether we’re forced to do the things we do. If this is the case, I do not trust the Big Five to build AI, and I do not trust countries like the US and China to build AI. I’d really only trust an AI coming from my own people and the communities of which I am a part. Huh, I’d say the same is true for humans I trust.

References

Jones, P. L. peterlucasjones. (n.d.). Twitter. Retrieved November 11, 2019, from twitter.com/peterlucasjones.

kōreromāori.io. (n.d.). Retrieved November 11, 2019, from koreromaori.io.

Mahelona, K. (n.d.). Keoni Mahelona - CTO - Te Hiku Media [LinkedIn profile]. Retrieved November 11, 2019, from linkedin.com/in/kmahelona.

Mahelona, K. (2019, February 27). Will Indigenous ways of thinking save AI? Medium. Retrieved from medium.com/@mahelona/what-does-the-future-look-like-for-ai-1ffdff620395.

Mozilla/DeepSpeech: A TensorFlow implementation of Baidu’s DeepSpeech architecture [repository]. (n.d.). GitHub. Retrieved November 11, 2019, from github.com/mozilla/DeepSpeech.

Search results for ‘keoni mahelona’ [webpage]. (n.d.). Te Hiku Media. Retrieved November 11, 2019, from tehiku.nz/search?q=keoni%20mahelona.

Te Hiku Media. (n.d.). Retrieved November 11, 2019, from tehiku.nz.

Author Bio:

Keoni Mahelona is currently building Te Reo Māori speech recognition tools including text to speech, speech to text, and measuring pronunciation. Mahelona’s main roles are project management and web development, primarily for koreromaori.com and koreromaori.io. They also built the indigenous media platform tehiku.nz which serves as a digital Marae for Te Hiku Media and the five Iwi of Muriwhenua. Their key contribution is the Kaitiakitanga License which serves to guard Indigenous data and IP from misuse while aiming to create opportunities for the advancement of Indigenous peoples.

The Indigenous Protocols and Artificial Intelligence (IP-AI) workshops are founded by Old Ways, New, and the Initiative for Indigenous Futures. This work is funded by the Canadian Institute for Advanced Research (CIFAR), Old Ways, New, the Social Sciences and Humanities Research Council (SSHRC) and the Concordia University Research Chair in Computational Media and the Indigenous Future Imaginary.


ʻUmeke kāʻeo: (Re)coding AI to ʻĀina

Joel Davison is a Gadigal and Dunghutti man from Sydney, Australia. He attended the March 2019 Indigenous Protocol and Artificial Intelligence workshops in Hawai’i. Here he explores the future of AI.

AI today is bound by practicality: talented developers, cutting-edge research, specialised hardware and top-of-the-line cyber security are all ingredients required to advance simple AI beyond current offerings. This means that the entities with the power to advance AI, those with access to pools of talent and academic connections as well as the funding for hardware and security, are those which already have much more money to invest than what is required to operate as a business. These entities, be they government or private, expect a return on investment. In this way AI advances will always be pushed in a direction that is either profitable or marketable. Because of this, AI is entwined with automation in our cultural lexicons, and it is this connection that often dominates conversation.

If Artificial Intelligence is to replicate human intelligence, then the most direct way to profit off of said intelligence is to exploit its labor value. In this way conversations are often steered towards analysis of the labor value of existing occupations. For example, advances by large tech companies in self-driving cars have every in-tune truck driver eyeing other industries at this point, and we¹ can’t² stop talking³ about⁴ it⁵.

The vast majority of these industry shaping moves that are being made are opportunities presented only to the wealthiest organisations on the planet, due to the benefit only being realised at a huge scale thanks to the costs outlined above, talent, research, hardware and security. It simply isn’t feasible for small organisations, potentially social ventures, NGOs or co-ops, to lay stake to a portion of the market without the network and capability to take advantage of the wider market. If the benefit of Artificial Intelligence in this liberal-capitalist frame is the profit earned by extracting more labor-value by reducing the overhead of hiring humans to manually perform tasks, then by the time you have paid the up-front costs for the research, development and specialised manufacturing to begin providing self-driving vehicles as a service, you start to realise that you need to roll out your service on a massive scale to begin to realise the benefits. In this environment Artificial Intelligence becomes a winner takes all venture, where the only participants are those already winning.

However, we have been seeing a shift in this landscape, a move by some of the largest organisations that changes the climate entirely. Having developed their AI and taken their time to scale and implement before they start to see the benefit, these large organisations have started to look for alternate revenue sources for their AI solutions. Most notable of these alternate revenue sources are the AI-as-a-service platforms, such as IBM’s Watson or Google’s TensorFlow. Suddenly, small organisations can provide the benefit of AI (or at least market that they do) without the tremendous up-front cost of research and specialised hardware. We are now seeing many small businesses and startups getting into the game of exploiting the difference in labor value between human intelligence and Artificial Intelligence, this time opening up smaller scales, nooks and crannies in the marketplace to be explored.

In all of these conversations we are only exploring the capital value of simple Artificial Intelligence: it’s the capitalist equivalent of only talking about the ‘why?’ of AI (the answer to which is almost always ‘money’). We rarely explore the impact of simple Artificial Intelligence. We never really ask ‘how?’, and when we do it’s always too late.

In November 2017, The Guardian broke the story of a secret police blacklist employed by the New South Wales Police,⁶ a “Suspect Targeting Management Plan”, which the NSW Police Commissioner called a “predictive style of policing”. This is kind of low-hanging fruit, isn’t it? My intention was to share a couple of cases where organisations hadn’t stopped to ask ‘how?’, or what their impact is, but surely no one on this program even stopped to ask ‘why?’. It doesn’t take a genius to figure out how this goes terribly wrong. Hell, you don’t even have to look much further than Marvel, who ran a (fantastic, by the way) crossover event by the title of “Civil War 2” which featured at its center the arguments for and against ‘predictive policing’. It’s actually kind of prophetic and I love it so.

*spoiler warning*

The event comes to a boiling point when a new Spider-Man, Miles Morales⁷ (a young African American and Puerto Rican man), is accused of murdering Steve Rogers, Captain America, in the future. After all of the superheroes have shared their perspectives and opinions and had their brawls, the takeaway is the question ‘is it ever okay to judge someone for something they haven’t done but could do?’, to which the answer is no, you shouldn’t, especially if the current criminal justice system isn’t suited to it and especially if you don’t think very carefully about it. Unfortunately the Australian criminal justice system isn’t suited to it and very clearly the NSW police did not think very carefully about it.

*spoilers over*

‘Okay Joel so you have some comic-books-based opinions on predictive justice, but seriously how bad could it be?’

It gets pretty bad. According to the NSW Police Commissioner Mick Fuller, “there were about 1,800 people subject to an STMP across the state. About 55% of them were Aboriginal”, the youngest of whom is nine years old. Currently Indigenous Australians only make up 3% of the national population, so how is it that we represent such a large portion of this database? Are we really that talented at crime? I mean, do we really commit 17 times more crime than any other Australian ethnicity? Of course not, that’s ridiculous, so how did this AI come up with this list of suspects? The truth is, we don’t know, and if you ask the police they wouldn’t know either. The company that they contracted to develop the solution likely doesn’t know either and doesn’t care how; they’ve already answered their ‘why?’ (read: money). Most likely the people developing the solution don’t understand how the AI’s learning algorithms work and didn’t think about the kind of data the AI was trained on before it started working on production data.
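The arithmetic behind that question can be sketched in a few lines of Python. This is a minimal illustration that uses only the two figures quoted above (55% of the list, 3% of the population); the function names are made up for this example, and the numbers describe who ends up on the list, not who commits crime.

```python
def representation_ratio(list_share, population_share):
    """How many times larger a group's share of the list is than its share of the population."""
    return list_share / population_share


def per_capita_listing_ratio(list_share, population_share):
    """Chance of being listed for a member of the group, relative to everyone else."""
    group_rate = list_share / population_share
    everyone_else_rate = (1 - list_share) / (1 - population_share)
    return group_rate / everyone_else_rate


# Figures quoted in the text: 55% of the STMP list, 3% of the national population.
LIST_SHARE = 0.55
POPULATION_SHARE = 0.03

print(f"{representation_ratio(LIST_SHARE, POPULATION_SHARE):.1f}")
print(f"{per_capita_listing_ratio(LIST_SHARE, POPULATION_SHARE):.1f}")
```

With the rounded 3% figure the first ratio comes out a little over 18; an assumed population share nearer 3.3% would give roughly the 17 quoted above. The second ratio compares per-person chances of being listed, and it is larger still.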

‘But Joel, they’d have to have thought pretty hard if they made the AI racist. It’s a machine, so it’s impartial to race and ethnicity.’ Turns out that’s not the case:⁸ AI more or less comes out of the box racist. This is due to how AI is configured in these projects. To perform better than humans, it needs to learn more than humans in the narrow field it’s being developed for, which is one of its strengths: it can take a huge set of training data and learn from it very quickly. The data is important, however, and as it so happens the most easily accessible large datasets are user-generated and contain all of their users’ prejudices. So it’s important to ask ‘what data set was it trained on?’ In this case, definitely existing data on previous arrests and criminal convictions by the Australian Federal Police. ‘Hold on, the data on previous arrests and criminal convictions by the Australian Federal Police reveals a strong recurring prejudice toward the Indigenous population of Australia?’
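To make the point about training data concrete, here is a toy sketch in Python. Every number in it is invented, the group labels and function names are hypothetical, and it is not a description of how the STMP list was actually produced (we don’t know that). It only shows that if two groups offend at the same rate but one is policed more heavily, a model that learns from arrest records will score that group as riskier.

```python
from collections import defaultdict


def make_records(group, population, offence_rate, detection_rate):
    """Build (group, was_arrested) records for a purely synthetic population."""
    offenders = round(population * offence_rate)
    arrested = round(offenders * detection_rate)
    return [(group, i < arrested) for i in range(population)]


def train_naive_risk_model(records):
    """'Learn' one risk score per group: the fraction of that group with an arrest on record."""
    totals = defaultdict(int)
    arrests = defaultdict(int)
    for group, was_arrested in records:
        totals[group] += 1
        arrests[group] += int(was_arrested)
    return {group: arrests[group] / totals[group] for group in totals}


# Both groups offend at the same invented rate; only the policing intensity differs.
records = (
    make_records("heavily_policed", 1000, offence_rate=0.10, detection_rate=0.8)
    + make_records("lightly_policed", 1000, offence_rate=0.10, detection_rate=0.2)
)

risk = train_naive_risk_model(records)
print(risk)
```

In this toy setup the ‘model’ scores the heavily policed group four times higher, even though the underlying behaviour was set to be identical. The prejudice comes in through the records, not through any line of code that mentions race.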

Imagine my shock.

So now the police have a racist AI that’s populating a confidential list of suspects who are majority Indigenous, who the police are now legally able to arrest before they commit a crime or do anything suspicious. Yeah, the police in 2017 criminalised being Aboriginal. That’s how bad it gets.

I’d love to say this proves the point I was making earlier about the impacts AI can have if we don’t ask ‘how?’ but it’s even worse than that. The fact of the matter is that unless we are very careful, AI-as-a-service can be used to intentionally obfuscate the ‘how?’. We don’t know how the NSW police’s AI became racist. We can make very good educated guesses about training data and configuration, but we don’t know: the AI obfuscates the process by which it came up with its database through its sheer complexity alone. The biggest problem is that in spite of this, the results are still being used with authority. Because it is an AI, a machine that ‘just runs analysis’, all it is doing is giving authority to existing and past prejudices and perpetuating said prejudices, rather than having the ability to challenge them like a human might.

We haven’t been asking ourselves ‘how?’, and when we don’t, we don’t move forward, we don’t challenge and we don’t change. We just become more efficient, and I don’t think that’s the vision anyone who is passionate about AI & Computer Science imagines. If we are to use AI to move our society forward, to make real change instead of just making profit, we need to ask ‘how?’.

References

Clevenger, S. (2019, February 13). Self-driving truck startups TuSimple, Ike attract more investment to fuel development. Transport Topics. Retrieved from ttnews.com/articles/self-driving-truck-startups-tusimple-ike-attract-more-investment-fuel-development.

McGowan, M. (2017, November 10). More than 50% of those on secretive NSW police blacklist are Aboriginal. The Guardian. Retrieved from theguardian.com/australia-news/2017/nov/11/more-than-50-of-those-on-secretive-nsw-police-blacklist-are-aboriginal.

Miles Morales (Earth-1610) [online wiki page]. (n.d.). Marvel Database Fandom Wiki. Retrieved from https://marvel.fandom.com/wiki/Miles_Morales_(Earth-1610).

Murphy, F. (2017, November 17). Truck drivers like me will soon be replaced by automation. You’re next. The Guardian. Retrieved from theguardian.com/commentisfree/2017/nov/17/truck-drivers-automation-tesla-elon-musk.

[Online article]. (2019, February 24). Retrieved from pressreviewer.com/2019/02/24/the-leading-companies-competing-in-the-global-mining-truck-market-industry-forecast-2018-2022/.

Orenstein, W. (2019, February 1). Automated ‘platoons’ of trucks might soon be driving on Minnesota roads. MinnPost. Retrieved from minnpost.com/good-jobs/2019/02/automated-platoons-of-trucks-might-soon-be-driving-on-minnesota-roads/.

Speer, R. (2017, July 13). How to make a racist AI without really trying [Blog post]. Retrieved from blog.conceptnet.io/posts/2017/how-to-make-a-racist-ai-without-really-trying/.

Rowe, A. (2018, August 30). The trucking industry’s future: go high tech or go home. Tech.Co. Retrieved from tech.co/news/trucking-industry-future-autonomous-drivers-vr-2018-08.

Welch, D., Coppola, G., & Dawson, C. (2019, February 24). Young CEO of electric vehicle startup Rivian has Amazon riding shotgun. Seattle Times. Retrieved from seattletimes.com/business/young-ceo-of-electric-vehicle-startup-rivian-has-amazon-riding-shotgun/.

1. Walker Orenstein, “Automated ‘platoons’ of trucks might soon be driving on Minnesota roads,” MinnPost, February 1, 2019 <minnpost.com/good-jobs/2019/02/automated-platoons-of-trucks-might-soon-be-driving-on-minnesota-roads/>.

2. Seth Clevenger, “Self-driving truck startups TuSimple, Ike attract more investment to fuel development,” Transport Topics, February 13, 2019 <ttnews.com/articles/self-driving-truck-startups-tusimple-ike-attract-more-investment-fuel-development>.

3. Adam Rowe, “The trucking industry’s future: go high tech or go home,” Tech.Co, August 30, 2018 <tech.co/news/trucking-industry-future-autonomous-drivers-vr-2018-08>.

4. David Welch, Gabrielle Coppola, & Chester Dawson, “Young CEO of electric vehicle startup Rivian has Amazon riding shotgun,” Seattle Times, February 24, 2019 <seattletimes.com/business/young-ceo-of-electric-vehicle-startup-rivian-has-amazon-riding-shotgun/>.

5. Finn Murphy, “Truck drivers like me will soon be replaced by automation. You’re next,” The Guardian, November 17, 2017 <theguardian.com/commentisfree/2017/nov/17/truck-drivers-automation-tesla-elon-musk>.

6. Michael McGowan, “More than 50% of those on secretive NSW police blacklist are Aboriginal,” The Guardian, November 10, 2017 <theguardian.com/australia-news/2017/nov/11/more-than-50-of-those-on-secretive-nsw-police-blacklist-are-aboriginal>.

7. “Miles Morales (Earth-1610),” <marvel.fandom.com/wiki/Miles_Morales_(Earth-1610)>.

8. Robyn Speer, “How to make a racist AI without really trying,” July 13, 2017 <blog.conceptnet.io/posts/2017/how-to-make-a-racist-ai-without-really-trying>.
